Eliza Effect

Inspired by a 1960s chatbot and more relevant today than ever, this effect serves as a warning about the nature of human-AI relationships.

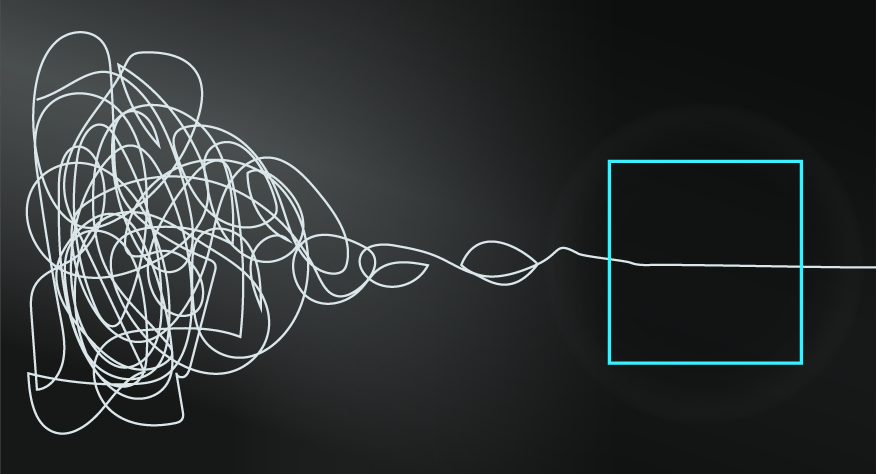

The Eliza Effect describes the human tendency to attribute human characteristics to non-human entities, thus anthropomorphising machines, computers, and things.

AI IS NOT REALLY INTELLIGENT, YET…

Let’s start by level-setting: ChatGPT, Bard, Bing and the broader wave of Large Language Models (LLMs) are generally not considered to be 'thinking' or 'sentient'. Instead, LLMs draw on enormous data sets (literature, online content, social media, etc.) and use a process known as deep learning, a combination of algorithms and statistical models, to generate text based on pattern recognition and context.

You might consider them to be glorified autocomplete systems, but if you’ve been chatting with them, you’ll know that this doesn’t do them justice. Indeed, the web is filled with examples of people holding fascinating conversations with LLMs and treating them as friends, trusted advisors, and even virtual lovers.

A HISTORY OF TALKING WITH MACHINES.

The mismatch between a computer's external output and what we assume is happening inside it isn’t new. In 1966, MIT’s Joseph Weizenbaum created Eliza, a chatbot that mimicked a therapist's questions. Eliza was programmed to rephrase user statements as questions and to respond to a few trigger words with blocks of related questions, so if you said ‘my mother doesn’t like me,’ Eliza would ask ‘why do you think your mother doesn’t like you?’ In addition, the term ‘mother’ would prompt other questions like ‘tell me about your family life’. Eliza was a very basic program by today’s standards, yet Weizenbaum was shocked at how readily users believed that the chatbot was listening to and cared about them, and how much they would reveal to it as a result (see Origins below for more).
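To show how little machinery this takes, here is a minimal Python sketch of the two behaviours described above: rephrasing a statement as a question, and reacting to trigger words. It is an illustration only, not Weizenbaum's original program. The names `REFLECTIONS`, `TRIGGERS`, `reflect` and `respond`, along with the word lists themselves, are assumptions made up for this example.

```python
import re

# Hypothetical word swaps that turn first-person wording into second person.
REFLECTIONS = {
    "i": "you",
    "me": "you",
    "my": "your",
    "mine": "yours",
    "am": "are",
    "i'm": "you're",
}

# Hypothetical trigger words, each mapped to a canned follow-up prompt.
TRIGGERS = {
    "mother": "Tell me about your family life.",
    "father": "Tell me about your family life.",
}


def reflect(statement: str) -> str:
    """Swap first-person words for second-person ones, word by word."""
    words = statement.lower().rstrip(".!?").split()
    return " ".join(REFLECTIONS.get(word, word) for word in words)


def respond(statement: str) -> list[str]:
    """Return a rephrased question plus any trigger-word follow-ups."""
    replies = [f"Why do you think {reflect(statement)}?"]
    words = set(re.findall(r"[a-z']+", statement.lower()))
    for trigger, follow_up in TRIGGERS.items():
        # Add each follow-up at most once, even if several triggers share it.
        if trigger in words and follow_up not in replies:
            replies.append(follow_up)
    return replies


if __name__ == "__main__":
    for line in respond("My mother doesn't like me"):
        print(line)
```

Nothing in this sketch models what the user means. It only swaps words and looks up keywords, which is why the sense of being listened to comes from the reader rather than the program.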

BEWARE THE UNCANNY VALLEY.

LLMs are now developing at a rate that is reminiscent of Moore’s Law and, on a connected but different path, the development of robots is also accelerating. The combination of LLMs and robotics is set to further challenge our perceptions of artificial intelligence, but beware the Uncanny Valley.

The Uncanny Valley is a phenomenon first identified by Masahiro Mori, a Japanese professor of robotics. It describes the way humans might feel discomfort and even revulsion towards robots or non-human entities that are almost, but not quite, human-like. Concretely, while an obviously synthetic and shiny robot might be accepted by humans, as would a robot that looked and behaved exactly like a human, a robot that almost looked and behaved like a human would likely provoke negative reactions in us. Ironically, until a fully human-like appearance can be achieved, we’re likely to see more basic options that rely on the Eliza Effect to get us to engage with them as if they were human.

THE DANGERS OF ANTHROPOMORPHISING THINGS.

The Eliza Effect might seem harmless when it simply involves naming and chatting with your car, but there are potential risks that are essential to keep in mind amidst current trends. Firstly, the Eliza Effect will likely lead you to overestimate or at least misinterpret the capabilities of chatbots and similar systems, which is problematic given their tendency to ‘hallucinate’ and return incorrect information. Perhaps more worrying, as Weizenbaum himself pointed out, the Eliza Effect means that you’re more likely to place greater trust in, and develop deeper relationships with, chatbots, which are almost universally run as commercial ventures. This worried Weizenbaum so much that he became an anti-AI campaigner, warning that the Eliza Effect would leave people vulnerable to the governments and corporations behind AI systems.

Even if you don’t share the full extent of Weizenbaum’s fears, the rise of AI assistants and even AI partners is a reminder to keep the Eliza Effect in mind, so that you maintain boundaries around any content, secrets, and emotions that you might share with LLMs.