21.9K views

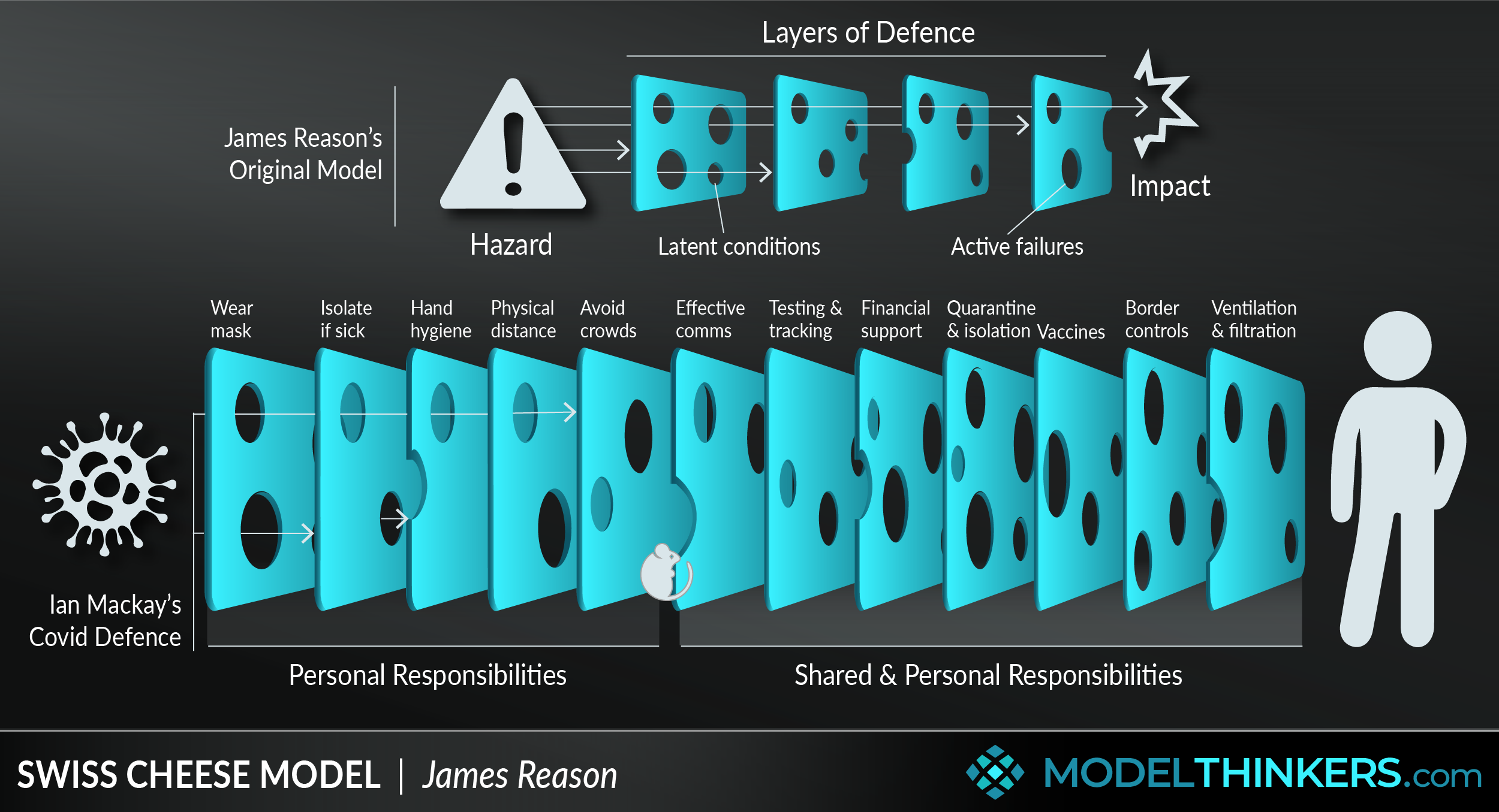

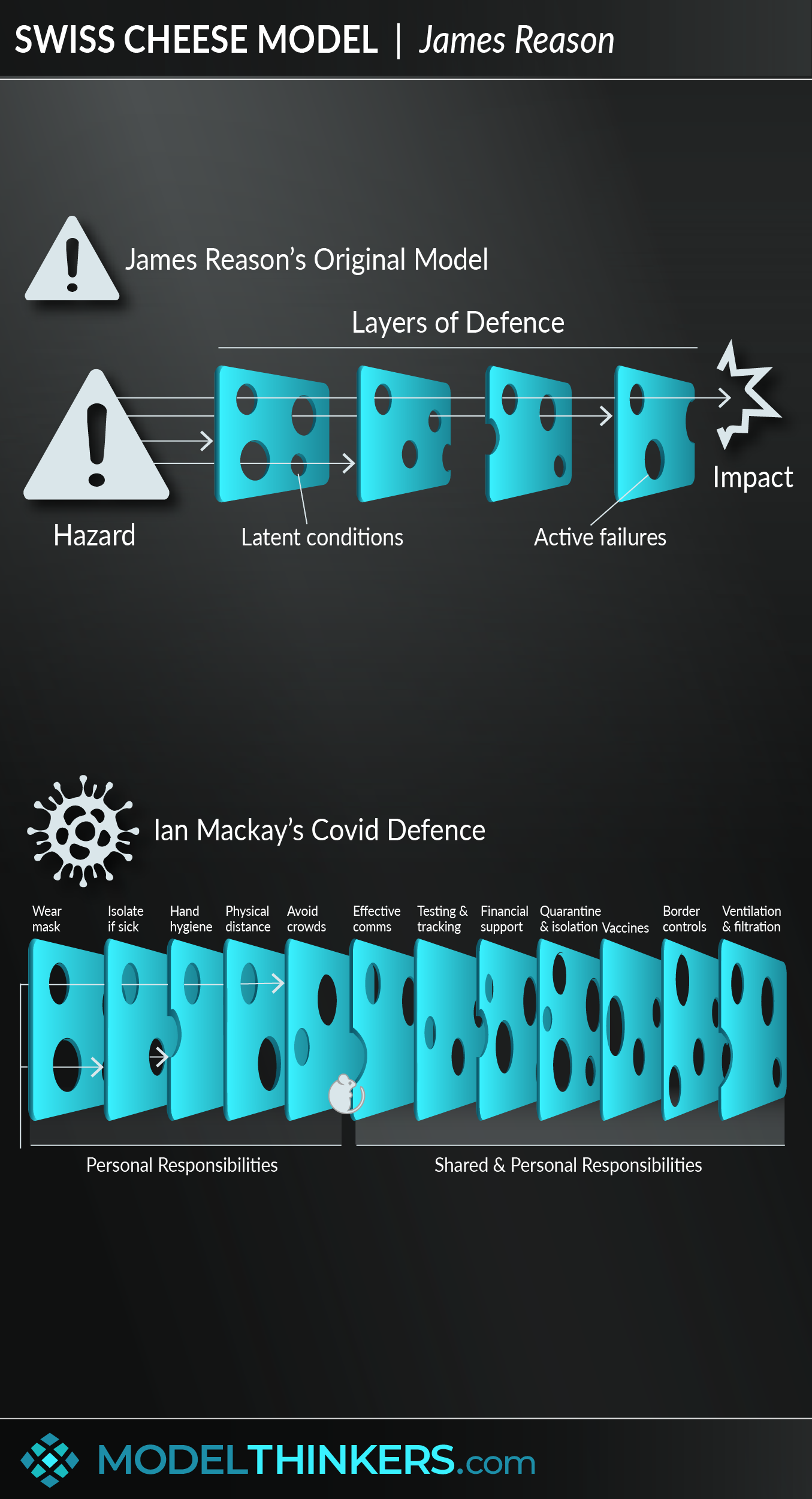

A popular model in risk management across domains as diverse as aerospace, healthcare, mining, and manufacturing, the Swiss Cheese Mo ...

- Assume that human error will occur.

Reason’s work was premised on the id ...



Nam sit amet erat euismod, tincidunt nisi a, tincidunt nunc. Sed sit amet ipsum non quam tincidunt tincidunt. Nulla facilisi. Donec vel libero nec justo tincidunt tincidunt. Sed ut perspiciatis unde omnis iste natus error sit voluptatem accusantium doloremque laudantium, totam rem aperiam, eaque ipsa quae ab illo inventore veritatis et quasi architecto beatae vitae dicta sunt explicabo. Integer in libero ut justo cursus tincidunt. Sed vitae libero sit amet dolor tincidunt tincidunt.

Aliquam in felis sit amet augue laoreet fringilla. Suspendisse potenti. Sed in libero ut nibh placerat accumsan. Proin ac libero euismod, tincidunt nisi a, tincidunt nunc. Sed sit amet ipsum non quam tincidunt tincidunt. Nulla facilisi. Donec vel libero nec justo tincidunt tincidunt. Sed ut perspiciatis unde omnis iste natus error sit voluptatem accusantium doloremque laudantium, totam rem aperiam, eaque ipsa quae ab illo inventore veritatis et quasi architecto beatae vitae dicta sunt explicabo.

Curabitur pretium tincidunt lacus. Nulla gravida orci a odio, et viverra justo commodo id. Aliquam in felis sit amet augue laoreet fringilla. Suspendisse potenti. Sed in libero ut nibh placerat accumsan. Proin ac libero euismod, tincidunt nisi a, tincidunt nunc. Sed sit amet ipsum non quam tincidunt tincidunt. Nulla facilisi. Donec vel libero nec justo tincidunt tincidunt. Sed ut perspiciatis unde omnis iste natus error sit voluptatem accusantium doloremque laudantium, totam rem aperiam, eaque ipsa quae ab illo inventore veritatis et quasi architecto beatae vitae dicta sunt explicabo.

Integer in libero ut justo cursus tincidunt. Sed vitae libero sit amet dolor tincidunt tincidunt. Aenean euismod, nisi vel consectetur interdum, nisl nisi cursus nisi, vitae tincidunt nisi nisl eget nisi. Sed ut perspiciatis unde omnis iste natus error sit voluptatem accusantium doloremque laudantium, totam rem aperiam, eaque ipsa quae ab illo inventore veritatis et quasi architecto beatae vitae dicta sunt explicabo. Vivamus lacinia odio vitae vestibulum. Nulla facilisi. Sed ut perspiciatis unde omnis iste natus error sit voluptatem accusantium doloremque laudantium, totam rem aperiam, eaque ipsa quae ab illo inventore veritatis et quasi architecto beatae vitae dicta sunt explicabo.

Nam sit amet erat euismod, tincidunt nisi a, tincidunt nunc. Sed sit amet ipsum non quam tincidunt tincidunt. Nulla facilisi. Donec vel libero nec justo tincidunt tincidunt. Sed ut perspiciatis unde omnis iste natus error sit voluptatem accusantium doloremque laudantium, totam rem aperiam, eaque ipsa quae ab illo inventore veritatis et quasi architecto beatae vitae dicta sunt explicabo. Integer in libero ut justo cursus tincidunt. Sed vitae libero sit amet dolor tincidunt tincidunt.

Aliquam in felis sit amet augue laoreet fringilla. Suspendisse potenti. Sed in libero ut nibh placerat accumsan. Proin ac libero euismod, tincidunt nisi a, tincidunt nunc. Sed sit amet ipsum non quam tincidunt tincidunt. Nulla facilisi. Donec vel libero nec justo tincidunt tincidunt. Sed ut perspiciatis unde omnis iste natus error sit voluptatem accusantium doloremque laudantium, totam rem aperiam, eaque ipsa quae ab illo inventore veritatis et quasi architecto beatae vitae dicta sunt explicabo.

Curabitur pretium tincidunt lacus. Nulla gravida orci a odio, et viverra justo commodo id. Aliquam in felis sit amet augue laoreet fringilla. Suspendisse potenti. Sed in libero ut nibh placerat accumsan. Proin ac libero euismod, tincidunt nisi a, tincidunt nunc. Sed sit amet ipsum non quam tincidunt tincidunt. Nulla facilisi. Donec vel libero nec justo tincidunt tincidunt. Sed ut perspiciatis unde omnis iste natus error sit voluptatem accusantium doloremque laudantium, totam rem aperiam, eaque ipsa quae ab illo inventore veritatis et quasi architecto beatae vitae dicta sunt explicabo.

Integer in libero ut justo cursus tincidunt. Sed vitae libero sit amet dolor tincidunt tincidunt. Aenean euismod, nisi vel consectetur interdum, nisl nisi cursus nisi, vitae tincidunt nisi nisl eget nisi. Sed ut perspiciatis unde omnis iste natus error sit voluptatem accusantium doloremque laudantium, totam rem aperiam, eaque ipsa quae ab illo inventore veritatis et quasi architecto beatae vitae dicta sunt explicabo. Vivamus lacinia odio vitae vestibulum. Nulla facilisi. Sed ut perspiciatis unde omnis iste natus error sit voluptatem accusantium doloremque laudantium, totam rem aperiam, eaque ipsa quae ab illo inventore veritatis et quasi architecto beatae vitae dicta sunt explicabo.

Nam sit amet erat euismod, tincidunt nisi a, tincidunt nunc. Sed sit amet ipsum non quam tincidunt tincidunt. Nulla facilisi. Donec vel libero nec justo tincidunt tincidunt. Sed ut perspiciatis unde omnis iste natus error sit voluptatem accusantium doloremque laudantium, totam rem aperiam, eaque ipsa quae ab illo inventore veritatis et quasi architecto beatae vitae dicta sunt explicabo. Integer in libero ut justo cursus tincidunt. Sed vitae libero sit amet dolor tincidunt tincidunt.

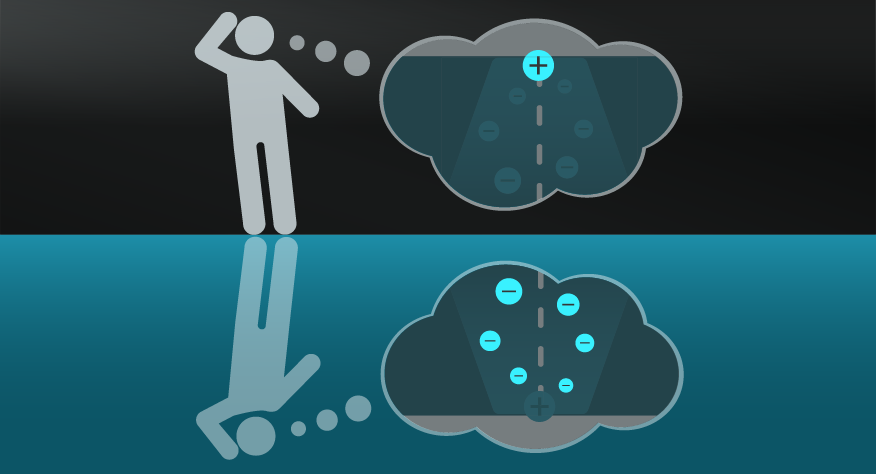

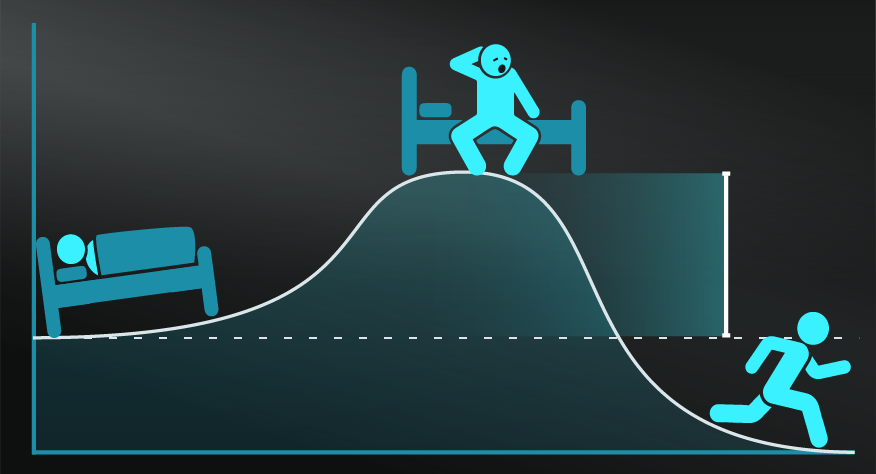

The Swiss Cheese metaphor has clearly resonated in safety and accident domains, though criticism has persisted. One of the main criticisms is that the metaphor is so simplistic that it becomes too generic to offer practical value. Many point out that Reason himself tried to expand on his work with subsequent diagrams and papers, none of which have endured as the Swiss Cheese Model has. At worst, it's seen as a reductionist approach born from his period working as a consultant; at best, it's seen as a tool he used to communicate important concepts to management, albeit relatively superficially.

For example, some argue that the metaphor presents accidents as linear occurrences when, in reality, they unfold in dynamic and non-linear ways. This links to a broader criticism that the model lacks a dynamic, systems view of problems and implies that each component, like a slice of cheese, can be altered and even fixed in isolation.

Another issue with the original diagram is that practitioners continue to interpret it so differently. While some argue that its broad definition allows for wide agreement and diverse application, others point to studies showing that practitioners hold different understandings of what the model represents and, as a result, what it means.

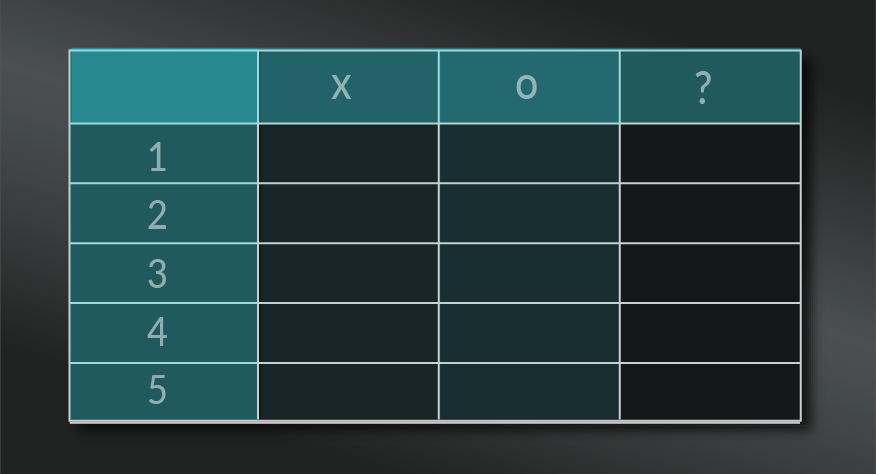

Covid.

Below is Australian virologist Ian Mackay’s repurposed version of the Swiss Cheese Model, as applied to Covid mitigation.

Bushfires.

Risk consultant Julian Talbot used this model to explain the devastation of the 2009 Australian bushfires in the diagram below.

Engineering.

Michigan Tech used this diagram to explore the safety elements in engineering, including a mitigation layer at the end.

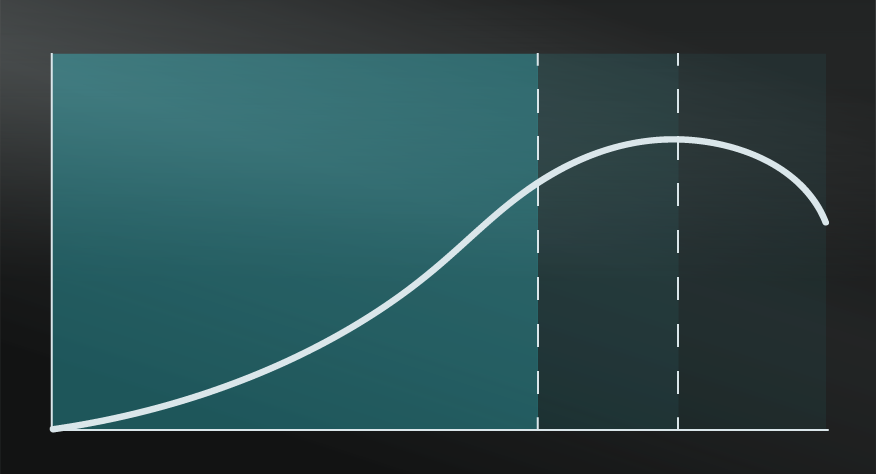

According to James Reason, his inspiration for this model came in the 1970s while he was making tea. Distracted by his large, insistent cat, he absent-mindedly dolloped a large spoonful of cat food into the teapot. Reason was fascinated by the similarities between the tasks that led to his mistake, and this deepened the research that culminated in his book A Life in Error: From Little Slips to Big Disasters. He was particularly interested in the impact of mistakes in human-machine interaction, especially in high-stakes fields ranging from aerospace to nuclear power.

Others have noted that Reason had input from John Wreathall in developing what was essentially an extension of traditional safety management thinking, informed by an understanding of human error. Reason published the original work behind this model in 1990, then explored it more explicitly in the British Medical Journal in 2000, though it was several years before it was developed as the organisational accident model and later became known as the Swiss Cheese Model.
